The Extended Berkeley Packet Filter (eBPF) is growing in popularity for the extensibility and observability it brings to the Linux kernel. eBPF is native to Linux operating systems, and a welcome tool given that Linux accounts for 90% of the public cloud workload. eBPF is an enhancement of the original Berkeley Packet Filter (BPF), which was initially developed for BSD.

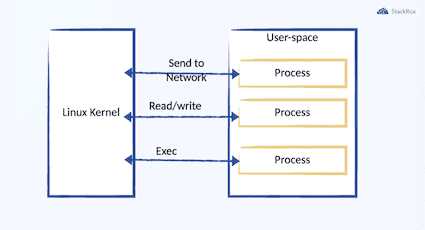

Working with the Linux kernel is necessary when implementing security and observability features, but interaction at scale has its drawbacks. Linux has layers of abstraction that can be difficult to debug, and applications must connect to the Linux kernel from the user space, which is slower and provides less insight into the kernel space.

eBPF has many advantages over traditional Linux observability methods. This blog will cover the history of eBPF, its use cases, and how it works. At the end of the blog, there is a list of resources to help you find more information and eBPF examples. If you wish to watch a segment on eBPF, you can catch a recent Office Hours segment with Robby Cochran and Andy Clemenko.

What is BPF, and what is the difference between BPF and eBPF?

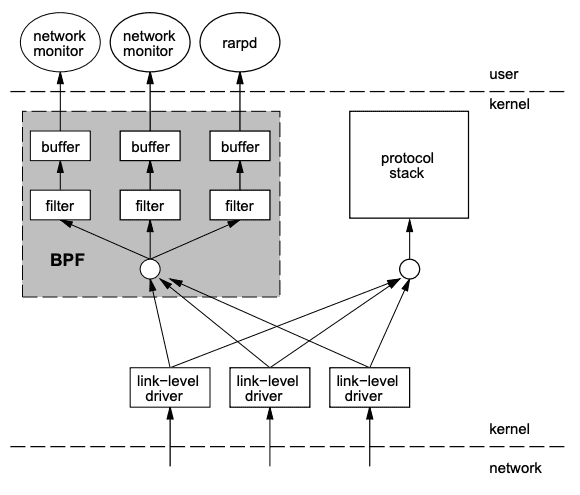

eBPF traces its roots to the work of Steven McCanne and Van Jacobson, who first published a paper on BPF in 1992. At the time, it was named the BSD Packet Filter due to its development on BSD. As the application became more widely adopted, it was renamed the Berkeley Packet Filter due to its development at the Lawrence Berkeley Laboratory.

BPF has been around for many years without fame, although other applications have used it. For example, tcpdump is built on top of BPF, and the BPF program can be viewed using the -d flag.

    sudo tcpdump -d "tcp dst port 22"
    (000) ldh [12]
    (001) jeq #0x86dd jt 2 jf 6
    ...
    (014) ret #262144
    (015) ret #0

BPF stayed in the background until Alexei Starovoitov reworked it and created the extended BPF (eBPF) to leverage advances in modern hardware.
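To make the listing above less opaque, here is a minimal sketch of how a classic BPF program is evaluated against a packet. It is a toy interpreter, not the kernel's implementation, and it supports only the three opcodes it needs. The four-instruction `PROGRAM` is a hypothetical filter written for this example (accept IPv4 frames, reject everything else) and is not the elided tcpdump output; `run_filter` and `ethernet_frame` are likewise illustrative names.

```python
import struct

ACCEPT_LEN = 262144  # the byte count the tcpdump listing returns on a match

# Hypothetical filter: accept IPv4 frames, reject the rest.
# Jump targets are absolute instruction indexes, as `tcpdump -d` prints them.
PROGRAM = [
    ("ldh", 12),            # A = 16-bit EtherType at byte offset 12
    ("jeq", 0x0800, 2, 3),  # if A == IPv4 go to 2, otherwise go to 3
    ("ret", ACCEPT_LEN),    # accept: keep up to 262144 bytes
    ("ret", 0),             # reject: keep 0 bytes
]


def run_filter(program, packet):
    """Interpret the toy program and return the number of bytes to keep."""
    acc = 0  # the accumulator register "A"
    pc = 0   # program counter
    while True:
        op, *args = program[pc]
        if op == "ldh":
            (offset,) = args
            if offset + 2 > len(packet):
                return 0  # an out-of-bounds load rejects the packet
            (acc,) = struct.unpack_from("!H", packet, offset)
            pc += 1
        elif op == "jeq":
            value, jump_true, jump_false = args
            pc = jump_true if acc == value else jump_false
        elif op == "ret":
            return args[0]
        else:
            raise ValueError(f"unsupported opcode: {op}")


def ethernet_frame(ethertype):
    """Build a minimal frame: two zeroed MAC addresses plus the EtherType."""
    return bytes(12) + struct.pack("!H", ethertype)


ipv4_verdict = run_filter(PROGRAM, ethernet_frame(0x0800))
arp_verdict = run_filter(PROGRAM, ethernet_frame(0x0806))
print(ipv4_verdict, arp_verdict)
```

The return value is the number of bytes of the packet to hand back, so a non-zero result means "keep" and zero means "drop".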

Since the addition of eBPF to the Linux kernel in December 2014 (v3.18), there have been a few upgrades. eBPF can be used for non-networking purposes, such as for attaching eBPF programs to various tracepoints. Since kernel version 3.19, users can attach eBPF filters to sockets, and, since kernel version 4.1, to traffic control classifiers for the ingress and egress networking data path.

Why is eBPF useful?

When your application requests data from the kernel, the data from the kernel space must be copied into user space. There are also limits on the kernel information accessible as resources are not freely available in kernel space. This limitation is because the operating system strictly partitions regions of memory used for the kernel, so it’s not possible to give a user space program a pointer to some part of kernel memory.

This results in user space applications having to copy information from the kernel before performing any desired functions. Copy operations like these can have significant performance implications. Containers have increased the density of processes that may run on a host, and each process may be unique and require individual security or monitoring considerations. The copy from the kernel space to the user space may not seem like much on a single host. However, as you scale out the collection of kernel space data, the performance overhead becomes more significant.

Instead of duplicating the data, BPF and eBPF create filters directly in the kernel.
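As a rough illustration of why filtering at the source matters, the sketch below counts how many bytes would cross the kernel/user boundary under each approach. Everything here is simulated in plain Python with made-up traffic; the function names and the "one packet in ten is interesting" ratio are assumptions chosen for the example, not measurements.

```python
def copy_everything(packets, wanted):
    """User space filtering: every packet is copied out before it is checked."""
    copied_bytes = sum(len(p) for p in packets)
    kept = [p for p in packets if wanted(p)]
    return copied_bytes, kept


def filter_in_kernel(packets, wanted):
    """In-kernel filtering: only the matching packets are copied out."""
    kept = [p for p in packets if wanted(p)]
    copied_bytes = sum(len(p) for p in kept)
    return copied_bytes, kept


def is_interesting(packet):
    """Stand-in for a real filter expression: match on the first byte."""
    return packet[:1] == b"S"


# Synthetic traffic: 1000 packets of 100 bytes, one in ten is interesting.
packets = [(b"S" if i % 10 == 0 else b"X") + bytes(99) for i in range(1000)]

naive_bytes, naive_kept = copy_everything(packets, is_interesting)
filtered_bytes, filtered_kept = filter_in_kernel(packets, is_interesting)
print(naive_bytes, filtered_bytes)
```

Both approaches end up with the same packets, but the second one moves a tenth of the data in this synthetic setup.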

The goal of BPF was to filter out unneeded packets and retrieve only those that are relevant. These packets could then be queried from the user space as needed, leading to a significant reduction in overhead.

eBPF uses the same event-driven architecture as BPF. eBPF programs are triggered by the events that users wish to monitor, and different hook points can be chosen based on what you want to observe. The execution of a kernel routine at a specific memory address, the arrival of a network packet, and the invocation of user space code are all examples of events that can be trapped by attaching eBPF programs to kprobes, XDP programs to packet ingress paths, and uprobes to user space processes, respectively.
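The event-driven model can be pictured with a small dispatcher: programs are attached to named hook points and only run when that hook fires. The sketch below is a plain-Python analogy, not a real eBPF API. `HookRegistry` and the hook name strings are hypothetical, chosen only to mirror the kprobe, XDP, and uprobe examples above.

```python
from collections import defaultdict


class HookRegistry:
    """Toy stand-in for the kernel's hook points (not a real eBPF API)."""

    def __init__(self):
        self._programs = defaultdict(list)

    def attach(self, hook, program):
        """Register a callable to run whenever `hook` fires."""
        self._programs[hook].append(program)

    def fire(self, hook, context):
        """Run every program attached to `hook` and return how many ran."""
        programs = self._programs.get(hook, [])
        for program in programs:
            program(context)
        return len(programs)


registry = HookRegistry()
seen = []

# Illustrative hook names only: a kernel function, a packet ingress path,
# and a user space function.
registry.attach("kprobe:tcp_v4_connect", lambda ctx: seen.append(("connect", ctx["pid"])))
registry.attach("xdp:eth0", lambda ctx: seen.append(("packet", ctx["length"])))
registry.attach("uprobe:readline", lambda ctx: seen.append(("readline", ctx["pid"])))

registry.fire("kprobe:tcp_v4_connect", {"pid": 4242})
registry.fire("xdp:eth0", {"length": 60})
ran_for_unused_hook = registry.fire("tracepoint:unused", {})
print(seen)
```

Nothing runs for a hook that has no program attached, which is the point of the model: work happens only in response to the events you chose to observe.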

eBPF alternatives

There are alternatives to eBPF to extend the Linux kernel, such as a kernel module. Robby Cochran summarized it briefly in our Office Hours:

“An alternative method to extend the Linux kernel is to write a kernel module. Now a kernel module is a way to essentially inject code into the Linux kernel and have a way to extend whatever feature you want … but to do that, you have to tie it directly to the version of the kernel, and the kernel is constantly changing.

So kernel modules have their overhead and issues. So what eBPF allows us to do is essentially extend the Linux kernel without submitting patches, without writing a kernel module, and extending the Linux kernel in ways that are safer. And it’s easier to do continuous delivery as the kernel changes.”

The reliability of the interaction between the user space and the kernel is critical to supporting applications. While kernel modules may be more configurable, they come with a high maintenance cost.

What can eBPF do?

Networking

The original purpose of BPF remains prevalent in eBPF today. The original design has been upgraded to take advantage of 64-bit architectures, with 10 registers and an increased number of opcodes. The speed and programmability of eBPF make adding parsers and logic scalable and straightforward. For networking solutions that need scalability, eBPF is an efficient foundation on which to build your user space programs.
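To give a feel for what "registers and opcodes" means in practice, the sketch below packs two eBPF instructions into their 8-byte wire format: an 8-bit opcode, two 4-bit register fields, a 16-bit offset, and a 32-bit immediate. The layout and the two opcode values follow the kernel's instruction encoding on little-endian hosts; the `encode` helper is a hypothetical name for this example. Registers R0 to R9 are general purpose, and R10 is a read-only frame pointer.

```python
import struct

BPF_ALU64_MOV_IMM = 0xB7  # BPF_ALU64 | BPF_MOV | BPF_K: dst = imm
BPF_JMP_EXIT = 0x95       # BPF_JMP | BPF_EXIT: return R0 to the caller


def encode(opcode, dst=0, src=0, off=0, imm=0):
    """Pack one eBPF instruction into its 8-byte little-endian form."""
    if not (0 <= dst <= 10 and 0 <= src <= 10):
        raise ValueError("registers are numbered R0 through R10")
    regs = (src << 4) | dst  # dst in the low nibble, src in the high nibble
    return struct.pack("<BBhi", opcode, regs, off, imm)


# The smallest useful program: load 0 into R0 (the return value), then exit.
program = encode(BPF_ALU64_MOV_IMM, dst=0, imm=0) + encode(BPF_JMP_EXIT)
print(program.hex(" ", 8))
```

Each instruction is a fixed 8 bytes, so this two-instruction program is 16 bytes long.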

Tracing & Profiling

eBPF is no longer tied to the subsystem that was initially focused on packet filtering. eBPF programs can be attached to a tracepoint or a kprobe. This extra functionality grants more context and allows greater instrumentation and performance analysis in the user space. Advanced statistical data structures enable the efficient extraction of meaningful data, without requiring the export of vast amounts of sampling data as similar systems typically do.
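One common example of such a data structure is a power-of-two histogram: instead of exporting every sample, each one is folded into a handful of buckets. The sketch below shows the idea in plain Python under the assumption of integer latency samples. The sample values are made up, and `log2_bucket` and `aggregate` are illustrative names rather than functions from any eBPF library.

```python
from collections import Counter


def log2_bucket(value):
    """Return the power-of-two bucket index for a non-negative integer."""
    return value.bit_length()  # 0 -> 0, 1 -> 1, 2..3 -> 2, 4..7 -> 3, ...


def aggregate(samples):
    """Fold raw samples into a small histogram instead of keeping them all."""
    histogram = Counter()
    for sample in samples:
        histogram[log2_bucket(sample)] += 1
    return histogram


latencies_us = [3, 5, 6, 120, 130, 900, 7, 4]  # made-up sample data
histogram = aggregate(latencies_us)

for bucket in sorted(histogram):
    low = 0 if bucket == 0 else 1 << (bucket - 1)
    high = (1 << bucket) - 1
    print(f"{low:>5} -> {high:<5} : {histogram[bucket]}")
```

Eight samples collapse into five buckets here. With millions of samples the number of buckets stays small, which is what keeps the amount of exported data down.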

Observability & Monitoring

With the additional functionality, eBPF enables in-kernel aggregation of custom metrics. eBPF doesn’t give you all of the answers, but it will provide:

- Latency: time to service a request

- Traffic generation: communication with other services

- Error: how often errors occur

Platforms can combine this information with existing tooling to collect CPU, memory, or disk usage, yielding greater context into individual processes. This flexibility allows for rich interfaces and service mapping, and the results can be displayed alongside the traditional performance metrics users expect.
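The latency, traffic, and error signals above can be thought of as counters kept per service in a key-value map, updated on every request and read out occasionally. The following sketch models that with a Python dictionary. The service names, event tuples, and the `ServiceStats` and `record` names are all hypothetical and stand in for whatever a real platform would collect.

```python
from dataclasses import dataclass


@dataclass
class ServiceStats:
    """Running totals for one service, updated once per request."""

    requests: int = 0
    errors: int = 0
    total_latency_us: int = 0

    @property
    def mean_latency_us(self):
        return self.total_latency_us / self.requests if self.requests else 0.0

    @property
    def error_rate(self):
        return self.errors / self.requests if self.requests else 0.0


def record(stats_map, service, latency_us, failed):
    """Update the per-service entry, creating it on first use."""
    stats = stats_map.setdefault(service, ServiceStats())
    stats.requests += 1
    stats.errors += int(failed)
    stats.total_latency_us += latency_us


# Made-up request events: (service, latency in microseconds, failed?)
events = [("checkout", 200, False), ("checkout", 400, True), ("search", 50, False)]

stats_map = {}
for service, latency_us, failed in events:
    record(stats_map, service, latency_us, failed)

for service, stats in sorted(stats_map.items()):
    print(service, stats.requests, stats.mean_latency_us, stats.error_rate)
```

Only the three running totals per service need to be read out, no matter how many requests were observed.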

Security

Understanding system calls and how applications behave at runtime is core to securing systems. By combining syscall collection with packet- and socket-level networking operations, you get a rich view of the lifecycle of processes and the interactions between them. Security systems used to handle each of these aspects separately. With eBPF, you can now approach system call filtering, network-level filtering, and process context tracing from a single viewpoint.

Wrapping Up

I hope you’ve got at least a baseline understanding of what eBPF is and why it is becoming increasingly helpful in managing our cloud workloads. Below is some extra information from our Office Hours that I hope you take advantage of.

Repository Location

Guides and Documentation

- https://ebpf.io/

- https://facebookmicrosites.github.io/bpf/

- https://docs.cilium.io/en/stable/bpf/

- http://www.brendangregg.com/index.html

- https://www.kernel.org/doc/Documentation/kprobes.txt

eBPF Development

- https://github.com/libbpf/libbpf

- https://github.com/iovisor/kubectl-trace

- https://github.com/iovisor/bcc

- https://github.com/iovisor/gobpf

- https://nakryiko.com/posts/bpf-portability-and-co-re/

Tools and Platforms using eBPF

- [security] https://falco.org/

- [security] www.stackrox.com

- [security] https://github.com/aquasecurity/tracee

- [networking] https://cilium.io/

- [visibility] https://osquery.io/

- [debugging] https://github.com/iovisor/kubectl-trace

- https://www.kernel.org/doc/Documentation/trace/tracepoints.txt

- https://suchakra.wordpress.com/2015/05/18/bpf-internals-i/

- https://jvns.ca/blog/2017/07/05/linux-tracing-systems/